Renewable energy owners are deploying AI faster than at any point in the industry's history. The tools are more accessible, the use cases are obvious and the pressure to improve portfolio performance is real. The problem is that most of what gets deployed doesn't deliver. Owners get dashboards and alerts, but the intelligence behind them is shallow. Trained on generic data and unable to contextualize what it sees, these tools produce outputs that require more manual validation than they save.

The value of any AI system is determined by two things: what it was built on, and the discipline of the people deploying it. Getting both right takes time. We knew that in 2016, and it holds even more true today.

Most owners are starting that journey now. A small number of teams have already spent several years on it. The difference in what those two groups can do for an asset owner is not marginal.

Why the Data Foundation Is Everything

A general-purpose AI has no frame of reference for what normal looks like at a 120 MW onshore wind site in Europe. It can process signals, but it can't contextualize them. When a yield deviation appears, the AI flags it. Whether that deviation reflects genuine underperformance, a regional weather pattern or a SCADA anomaly is something the owner has to determine manually. That defeats the purpose.

The same problem applies to benchmarking. Without a relevant peer group, AI can tell you what your portfolio is doing but not whether that's relatively good or bad. An owner facing an investor question about underperformance needs more than a signal. They need a defensible answer.

This is where model errors become expensive: confident but incorrect outputs that propagate through a reporting workflow before anyone catches them. In renewable asset management, where decisions carry financial and contractual weight, that cost is not theoretical.

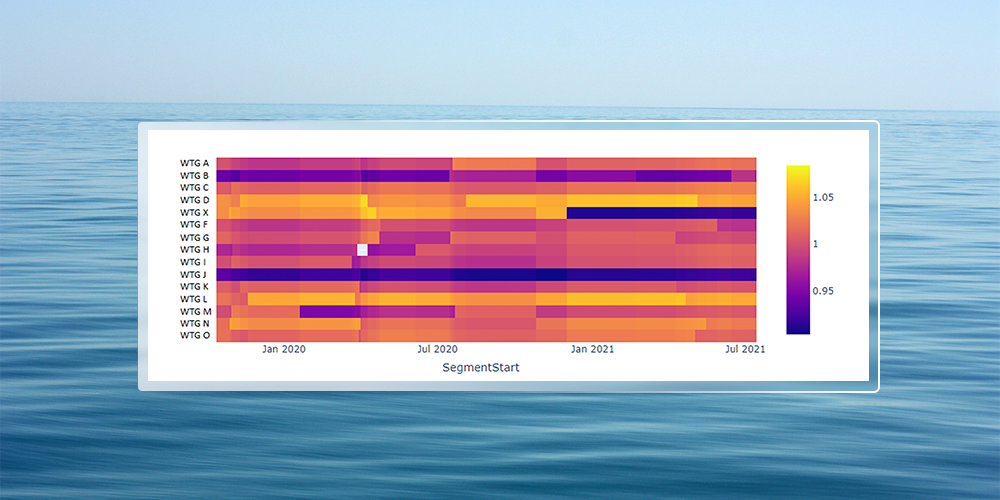

Building the data foundation that solves this takes years. It requires consistent access to operational data across asset types, geographies, and market conditions, and the investment to make it useful for benchmarking rather than just storage. Most owners are not positioned to build that foundation on their own timeline.

What Responsible AI Deployment Requires

Three things have to be true for AI to produce actionable, defensible intelligence.

First, the model needs to be grounded in industry intelligence: real portfolio data, contextualized at scale, that gives the AI a substantial baseline to work from. A model trained on a few public datasets cannot meaningfully benchmark a real asset against relevant peers. Without that grounding, the model produces educated guesses rather than defensible intelligence.

Second, human judgment must remain in the loop. Responsible automation does not remove owners from the decision chain. It reduces the volume of noise they have to sort through, so their attention lands on the signals that matter. The job of AI in asset management is to surface the right issues at the right time, not to resolve them autonomously. The moment an AI system starts making consequential decisions without a human checkpoint, the accountability question becomes impossible to answer.

Third, someone in the organization has to own the governance. The owners who get consistent, defensible results from AI are not always the ones with the most sophisticated tools. They are the ones where leadership has defined what the AI is allowed to do, who is accountable for its outputs, and what happens when something goes wrong. Governance is not friction. It is what makes adoption durable.

When all three conditions are met, AI changes the dynamic between owners and their assets. Owners walk into quarterly reviews with their own independent intelligence rather than a filtered version of service provider reporting. They can ask sharper questions because they already know what to look for, and because the data is independent and unambiguous. The conversation shifts from "trust us" to "here's what the data shows."

What It Means to Partner With a Team That Has Already Done This Work

Clir has been building this foundation for a decade. When an owner partners with Clir, they are not buying access to a dashboard. They are accessing trustworthy, proprietary renewables intelligence built on data from 350+ GW of wind, solar, and BESS assets, one of the most extensive proprietary operational foundations in the renewable energy sector. That is a decade of work the owner does not have to do themselves, and it is what makes the benchmarking defensible rather than directional.

On security and compliance: SOC 2 Type 2 does not specifically reference AI, but AI-related systems fall under the same security, confidentiality and privacy requirements. Clir continuously monitors its security and compliance practices and undergoes independent audits, including SOC 2 Type 2, to maintain the standards its customers expect.

Clir has also signed Canada's Voluntary Code of Conduct on Responsible Development and Management of Advanced Generative AI Systems, administered by Innovation, Science and Economic Development Canada, making it one of a group of companies in this sector to have made a formal governance commitment to a government body. For owners of renewable assets who face board-level questions about AI accountability, that governance posture is part of what the partnership provides.

The result is that owners come to board meetings and investor calls with independent intelligence they can defend: here is what the data showed, here is how it compares to relevant peers, and here is the decision we made and why. That capability used to take years to build. The right partnership makes it available from the start of the engagement.

The Owners Who Get This Right

A decade of working with AI in this sector has made one thing clear. The gap between owners who benefit from AI and those who don't comes down to three things: the quality of what the AI was built on, the governance framework behind how it is used, and whether leadership has defined accountability clearly.

The owners who get the most from AI are the ones who stop treating it as a reporting layer and start treating it as an independent intelligence function that runs continuously, benchmarks rigorously, and gives them the confidence to ask better questions of everyone in their portfolio.

The question for any owner reading this is not whether to use AI. It is whether to spend the next several years building the foundation (the data, the governance, the operational context) from scratch, or to partner with a team that has already done that work and is doing it right now for portfolios like yours.
